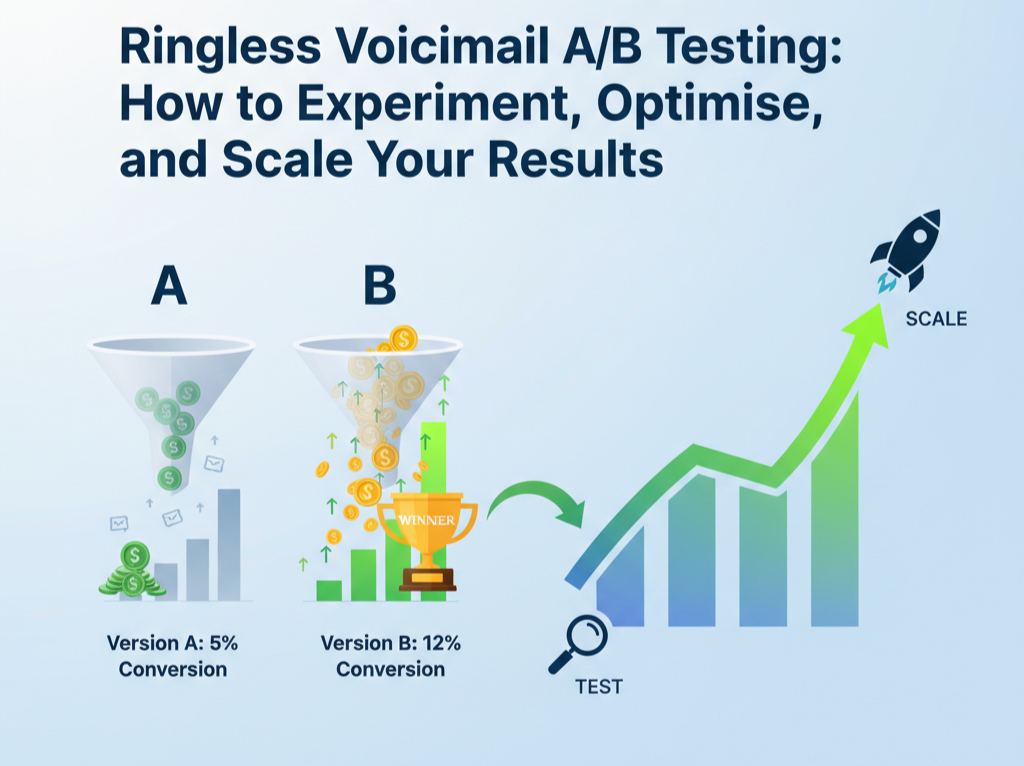

A/B testing is one of the most effective ways to improve your ringless voicemail (RVM) performance. Instead of guessing what works, you use real data to compare two variations of a message, delivery time, or audience segment and choose the option that performs best. When done correctly, A/B testing helps you improve response rates, cut costs, and scale campaigns more confidently.

If you’re already using ringless voicemail for lead generation, customer engagement, or retargeting, testing should become a core part of your strategy. In this guide, you’ll learn how to run tests, what to measure, how to interpret results, and how to scale winning versions across your entire outreach system.

Why A/B Testing Matters

Ringless voicemail is powerful because it reaches customers without interrupting them. But results vary depending on script, tone, timing, and segmentation. A/B testing removes assumptions by showing exactly what your audience responds to. Businesses that test regularly usually experience:

- Higher callback and response rates

- Lower cost per lead

- Better customer engagement

- Improved message relevance

- More efficient scaling

If your campaigns feel inconsistent or unpredictable, A/B testing helps uncover which elements move the needle.

What You Can Test

Almost every part of a ringless voicemail campaign can be tested, but the most impactful categories fall into five groups: script, voice style, timing, targeting, and offer. Each one influences how your message is received and how likely someone is to respond.

Scripts & Messaging

The script is the heart of your voicemail. Testing different versions lets you understand which approach resonates best. You can adjust:

- Greeting style (formal vs. casual)

- Message length

- Opening hook

- Value proposition

- Call-to-action phrasing

- Urgency vs. non-urgency tones

Even small changes in the first sentence can significantly influence engagement, so scripts are usually a great place to start.

Voice Style & Delivery

Voice characteristics matter because listeners respond to tone, pacing, and personality. Test:

- Male vs. female voice

- Fast vs. slow delivery

- Conversational vs. authoritative tone

- Sincere vs. energetic style

- Local accent vs. neutral accent

Audiences respond differently depending on age, region, and industry, so testing helps identify the best match for your brand.

Send Time & Frequency

Timing can dramatically change performance. Test:

- Morning vs. afternoon

- Weekday vs. weekend

- Immediate send vs. delayed follow-up

- Frequency (single voicemail vs. sequence)

What performs well for B2C might not work for B2B, and winter behaviour can differ from summer, so ongoing testing keeps your timing on track.

Audience Segmentation

Segmentation tests explore which groups respond better. Try testing:

- New leads vs. warm contacts

- Past customers vs. dormant users

- Geographic areas

- Buyer personas

- Behavioural groups (clicked email, visited site, etc.)

Better segmentation often leads to higher conversions with fewer messages sent.

Offers & Incentives

Your offer is often the biggest driver of conversions. Test variations such as:

- Discounts vs. free trials

- “Limited-time” vs. “exclusive access”

- Direct CTA (call back) vs. indirect CTA (visit link)

Even changing the framing of the offer can boost conversions.

How to Set Up an A/B Test

A structured approach ensures your tests are valid and your results reliable. Follow this six-step process:

1. Define a Clear Goal

Your test should answer one specific question, such as:

- “Does changing the opening sentence increase callbacks?”

- “Does a female voice outperform a male voice in engagement?”

Keep it simple: one change per test.

2. Choose One Variable at a Time

Changing too many elements at once leads to unclear results. For example:

- Don't test a new script and a new send time in the same test

- Don't change the voice, CTA, and offer together

Only one variable should change between version A and version B.

3. Split Your Audience Randomly

Your audience should be divided evenly and randomly. This eliminates bias and ensures clean data. Make sure the sample size is large enough to reveal meaningful trends.
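
A random, even split can be sketched in a few lines of Python. This is a minimal illustration rather than a feature of any particular RVM platform: the `split_audience` function, its `seed` parameter, and the placeholder contact IDs are all assumptions made for the example.

```python
import random


def split_audience(contacts, seed=42):
    """Shuffle a copy of the contact list and split it into two near-equal groups."""
    rng = random.Random(seed)      # fixed seed so the same split can be reproduced
    shuffled = list(contacts)      # copy, so the original list is left untouched
    rng.shuffle(shuffled)
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]


# Placeholder contact IDs; in practice these would come from your CRM export.
contacts = [f"contact-{i}" for i in range(1000)]
group_a, group_b = split_audience(contacts)
print(len(group_a), len(group_b))
```

Shuffling before splitting avoids accidental bias from list order, for example when a list is sorted by sign-up date or region. Keeping the seed on record lets you recreate the exact groups later if you need to audit a result.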

4. Launch Both Variants Under Similar Conditions

Send A and B during the same timeframe so external factors don’t influence outcomes. If you send Version A in the morning and Version B in the evening, timing becomes a confounding variable and the test becomes unreliable.

5. Measure the Right KPIs

The metrics you track depend on your goal. Common RVM A/B testing metrics include:

- Listen rate

- Callback rate

- Response rate (SMS, callback, site visit)

- Conversion rate

- Cost per response

- Drop-off points

Tracking the wrong KPI can lead to poor decisions, so match metrics to your test’s purpose.
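
As a rough sketch, a few of these KPIs can be computed from raw campaign counts as shown here. The field names, the choice of delivered voicemails as the denominator, the use of callbacks as the only counted response, and the sample numbers are all assumptions for illustration; your platform may define and report these metrics differently.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VariantStats:
    """Raw counts for one variant (hypothetical field names)."""
    delivered: int     # voicemails successfully delivered
    callbacks: int     # recipients who called back
    conversions: int   # recipients who completed the goal action
    spend: float       # total cost of sending this variant


def callback_rate(s: VariantStats) -> float:
    return s.callbacks / s.delivered if s.delivered else 0.0


def conversion_rate(s: VariantStats) -> float:
    return s.conversions / s.delivered if s.delivered else 0.0


def cost_per_response(s: VariantStats) -> Optional[float]:
    # Undefined when nobody responded, so return None instead of dividing by zero.
    return s.spend / s.callbacks if s.callbacks else None


# Illustrative numbers only, not real campaign data.
variant_a = VariantStats(delivered=2000, callbacks=80, conversions=16, spend=100.0)
variant_b = VariantStats(delivered=2000, callbacks=110, conversions=22, spend=100.0)

for name, stats in (("A", variant_a), ("B", variant_b)):
    print(name, callback_rate(stats), conversion_rate(stats), cost_per_response(stats))
```

Computing every variant's KPIs with the same functions keeps the comparison consistent, which matters more than the exact definitions you choose.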

6. Document and Apply the Results

Record what you tested, the results, and why one version won. This documentation creates a library of insights that strengthens future campaigns. Apply the winning variation across your next campaign and continue iterating.
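
One lightweight way to keep such a record is a small structured entry per test. The sketch below is only a suggestion: the `ExperimentRecord` class, its fields, and the sample values are hypothetical and can be adapted to whatever your team already tracks.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ExperimentRecord:
    """One entry in a test log (hypothetical structure)."""
    name: str
    variable: str     # the single element that changed
    variant_a: str
    variant_b: str
    kpi: str          # the metric the test was judged on
    result_a: float
    result_b: float
    winner: str
    notes: str        # why you think the winner won


# Illustrative values only.
record = ExperimentRecord(
    name="opening-line-test",
    variable="opening sentence",
    variant_a="formal greeting",
    variant_b="casual greeting",
    kpi="callback rate",
    result_a=0.040,
    result_b=0.055,
    winner="B",
    notes="Casual opener; the result should be confirmed on a larger list.",
)

# One JSON object per line is easy to append to a file or load into a spreadsheet.
log_line = json.dumps(asdict(record))
print(log_line)
```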

Tools That Support A/B Testing

Many modern RVM platforms offer built-in testing tools, automation, and analytics. When selecting a platform, look for features such as:

- Split testing functionality

- Real-time performance dashboards

- Audience segmentation

- Script storage and version tracking

- Integration with CRM and marketing tools

If you’re using an advanced tool like RinglessVoicemail.ai, features such as automated analytics and performance insights can help speed up testing and simplify scaling.

Common A/B Testing Mistakes

Even experienced marketers fall into these traps. Avoid them to keep your results accurate.

Testing Too Many Variables

If multiple elements change at once, you won’t know which one affected the results. Stick to testing one variable at a time.

Small Sample Sizes

If your audience is too small, results won’t be statistically meaningful. Larger tests produce clearer, more dependable insights.
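
To get a feel for what "large enough" means, you can estimate the required sample size before launching. The sketch below uses the standard normal-approximation formula for comparing two proportions. The function name, the 5% significance level, the 80% power, and the example rates are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.80):
    """Approximate contacts needed in EACH group to detect the given difference."""
    if baseline_rate == expected_rate:
        raise ValueError("The two rates must differ to compute a sample size.")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)   # two-sided significance threshold
    z_beta = NormalDist().inv_cdf(power)            # desired statistical power
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Example assumption: a 4% callback rate today, hoping to detect a lift to 5%.
needed = sample_size_per_variant(0.04, 0.05)
print(needed)
```

With rates this low, detecting a one-point lift takes several thousand contacts per variant, and smaller expected differences need considerably more. If your list is smaller than the estimate, consider testing a bolder change that is likely to produce a bigger difference.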

Ignoring External Factors

Holidays, seasonal behaviours, or industry events can influence responses. If you compare results from different weeks, the difference may reflect those outside factors rather than the variable you tested.

Misaligned KPIs

Make sure the KPI matches the goal. If you test timing but only measure conversions, you won’t get the full picture.

Stopping a Test Too Early

Early results can be misleading. Allow enough time for the campaign to reach a significant percentage of the audience.
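
One common way to judge whether a difference is more than noise is a two-proportion z-test on the final counts. The sketch below is a simplified illustration using only the Python standard library. The function name and the example counts are assumptions, and the test is meant to be run once, after the planned audience has been reached, because checking repeatedly while results come in raises the chance of a false positive.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(successes_a, total_a, successes_b, total_b):
    """Return (z, two-sided p-value) for the difference between two response rates."""
    rate_a = successes_a / total_a
    rate_b = successes_b / total_b
    pooled = (successes_a + successes_b) / (total_a + total_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / total_a + 1 / total_b))
    if std_err == 0:
        # Both groups had 0% or 100% responses, so there is no difference to test.
        return 0.0, 1.0
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Illustrative counts only: 80 callbacks from 2,000 sends vs. 110 from 2,000.
z, p_value = two_proportion_z_test(80, 2000, 110, 2000)
print(f"z = {z:.2f}, p = {p_value:.3f}")
```

A small p-value (0.05 is a common threshold) suggests the gap between A and B is unlikely to be chance alone. A large one means you should keep the test running or treat the result as inconclusive.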

Scaling Your Winning Results

Once you’ve identified winning variations, the next phase is scaling. Scaling multiplies the impact of your improvements and applies what you’ve learned across your marketing ecosystem.

Expand to a Larger Audience

Roll out the winning variation from your test to your full list. Monitor performance to ensure it holds up at scale.

Create Iterative Tests

Build on your winning version to find even more gains. For example:

- If Script B beats Script A, test Script B vs. Script C

- If morning delivery wins, test different morning hours

- If one voice style works, test energy levels or tone

A/B testing is a cycle, not a one-time event.

Integrate Learnings Into Your Multi-Channel Strategy

Use insights from your ringless voicemail tests to improve:

- SMS follow-ups

- Email sequences

- Retargeting ads

- CRM workflows

Automate Scaling With Technology

Modern platforms let you automate:

- Multi-step outreach sequences

- Auto-optimisation based on engagement

- Dynamic audience segmentation

Automation ensures consistent performance without manual effort.

FAQs

What is A/B testing in ringless voicemail?

A/B testing involves sending two different versions of a voicemail message, such as different scripts, tones, or sending times, to separate audience groups to see which performs better. It helps you optimise engagement, response rates, and conversions using real data instead of assumptions.

What should I test first in a ringless voicemail campaign?

Start with the highest-impact elements:

- The script and opening line

- The call-to-action

- The tone or voice style

These usually deliver the fastest performance improvements. After that, test timing, segmentation, and offer variations.

How long should an A/B test run to get accurate results?

Run your test until both versions reach a statistically meaningful sample size. For large lists, this might only take hours; for smaller lists, it may require several days. The key is allowing enough audience interactions to ensure the results are reliable.

Why should I test only one variable at a time?

Testing more than one change at once, such as a new script and a new voice tone, makes it impossible to know which variable influenced the results. Keeping tests isolated ensures clean, actionable insights that can be scaled confidently.

What metrics should I measure in A/B testing?

Track metrics that match your goal. The most important KPIs include:

- Listen rate

- Callback or response rate

- Conversion rate

- Cost per lead

- Engagement across follow-up steps

Using the right KPIs helps you understand what truly improves campaign performance.

A/B Testing Is the Key to Dominating Ringless Voicemail Marketing

A/B testing is one of the fastest and most reliable ways to improve your ringless voicemail campaigns. By testing one variable at a time, tracking meaningful metrics, and scaling your winning variations, you can continuously improve engagement and conversions. Over time, your messaging becomes sharper, your audience segmentation becomes smarter, and your overall marketing performance strengthens.

With the right testing strategy and tools, RinglessVoicemail.ai can help you make your campaigns far more predictable, profitable, and scalable, keeping you ahead in an increasingly competitive digital landscape. Ready to take the next step? Contact RinglessVoicemail.ai today to start optimising your campaigns.